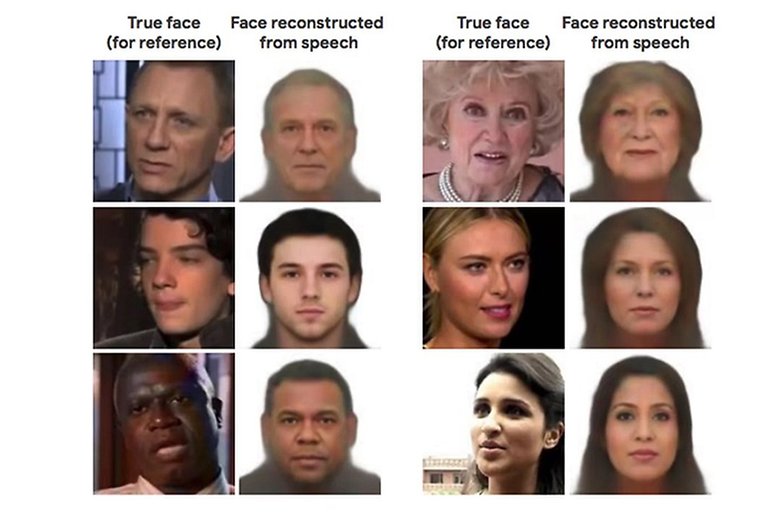

An AI algorithm can draw faces just from people's voices

We have all heard the voice of an unknown person, and made an imaginary portrait of that person in our minds, with varying degrees of success. Now, an algorithm is doing the same experiment. But accurate is it?

- San Francisco is first city in the US to ban facial recognition surveillance

The algorithm in question is called Speech2Face. A group of scientists trained the neural network using millions of videos located on the network, in which more than 100,000 people can be heard talking. According to what was written by the researchers in their study, the algorithm used the Speech2Face data to develop associations between vocal lines and certain physical features of the human face. Later, the AI began to make portraits of various people using only their voices as a reference.

The results of the research were uploaded to the network on May 23, in the pre-publication arXiv. However, such data have not yet been contrasted by other scientists working in the same field.

But how accurate is the algorithm? We can say that, (fortunately), AI still cannot identify individuals solely on the basis of samples of their voices. Rather, the neural network identifies traits associated with certain factors, such as gender, age, and ethnicity, but these traits are shared by a considerable number of people. Therefore, the images generated are more of an "average" than accurate individual portraits.

That said, Speech2Face has generated portraits of astonishing accuracy, but has also shown certain weaknesses when confronted with language and/or pronunciation variations. For example, the AI produced two totally different portraits of the same person, having listened to her speaking Chinese and English. Anyway, in general, the ability of the algorithm to portray the human being is much greater than when trying to portray cats, as you can see in the image below.

What do you think? Do you like idea of an algorithm that can picture our faces from our voices? Or would it be better to preserve the 'anonymity of audio'? Tell us your opinion in the comments below.

Source: LiveScience

Awesome AI Technology